As the internet fills inexorably with AI slop, searchers and search engines have grown more skeptical of content, brands, and publishers.

Thanks to generative AI, it’s the easiest it’s ever been to create, distribute, and find information. But thanks to the bravado of LLMs and the recklessness of many publishers, it’s fast becoming the hardest it’s ever been to tell the difference between real, good information and regurgitated, bad information.

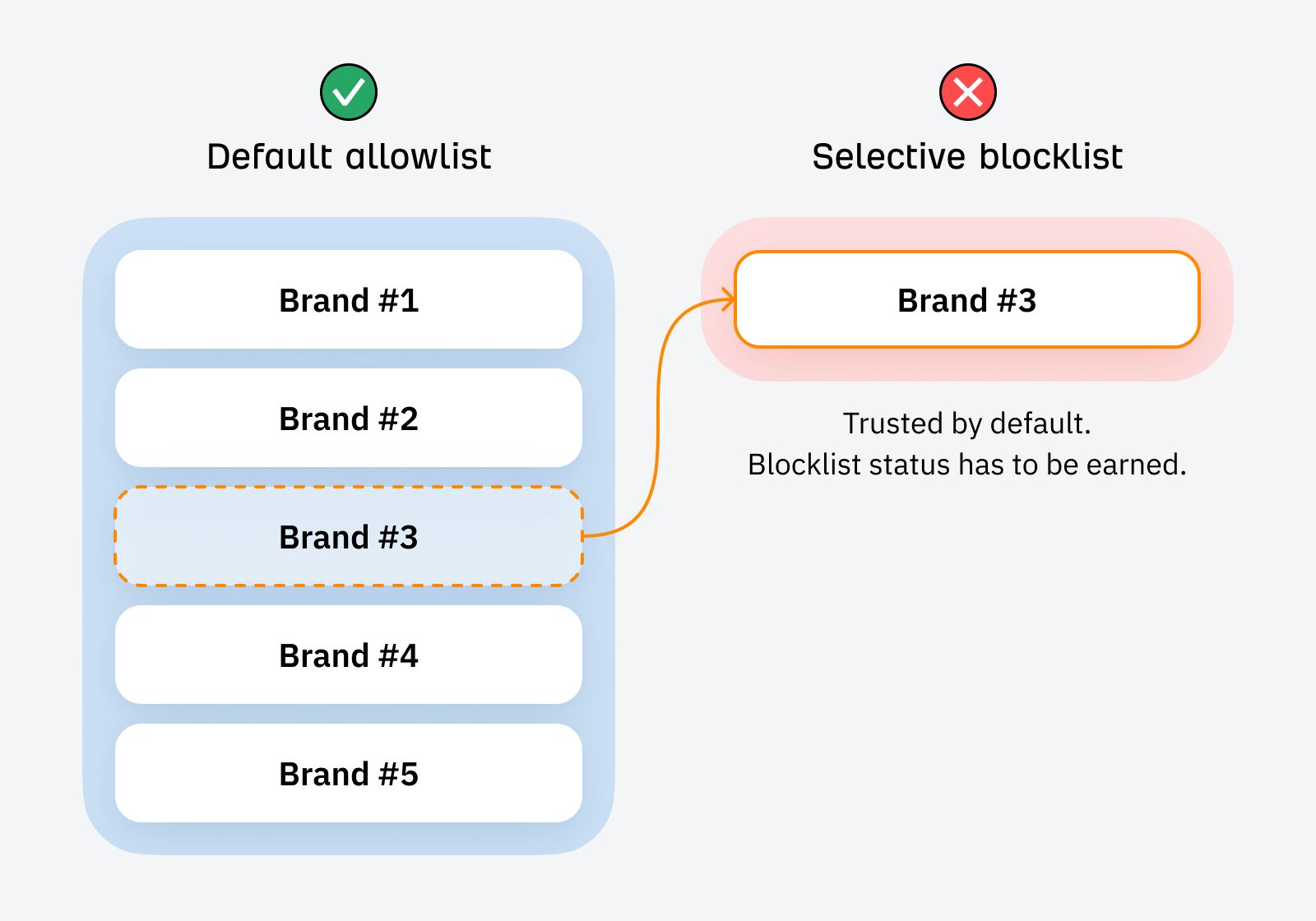

This one-two punch is changing how Google and searchers alike filter information, choosing to distrust brands and publishers by default. We’re moving from a world where trust had to be lost to one where it has to be earned.

As SEOs and marketers, our number one job is to escape the “default blocklist” and earn a spot on the allowlist.

With so much content on the internet (and so much of it AI-generated slop), it’s too taxing for people or search engines to evaluate the veracity and trustworthiness of information on a case-by-case basis.

We know that Google wants to filter out AI slop.

In the past year, we’ve seen five core updates, three dedicated spam updates, and a huge emphasis on EEAT. As these updates are iterated on, indexing for new sites is incredibly slow (and arguably more selective), with more pages stuck in “Crawled - currently not indexed” purgatory.

But this is a hard problem to solve. AI content is not easy to detect. Some AI content is good and useful (just as some human content is bad and useless). Google wants to avoid diluting its index with billions of pages of poor or repetitive content, but this bad content looks increasingly similar to good content.

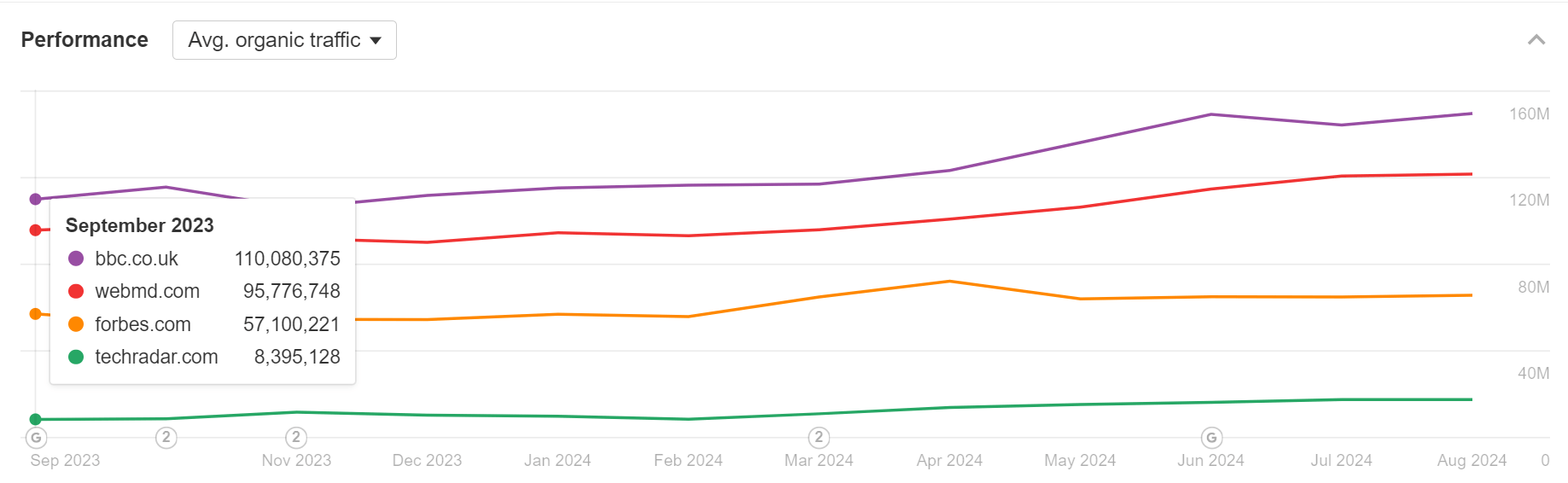

This problem is so hard, in fact, that Google has hedged. Rather than evaluating the quality of each article, Google seems to have cut the Gordian knot, choosing instead to elevate big, trusted brands like Forbes, WebMD, TechRadar, or the BBC into many more SERPs.

After all, it’s far easier for Google to police a handful of huge content brands than many thousands of smaller ones. By promoting “trusted” brands (brands with some kind of track record and public accountability) into dominant positions in popular SERPs, Google can effectively inoculate many search experiences against the risk of AI slop.

(Worsening the problem of “Forbes slop” in the process, but Google seems to view it as the lesser of two evils.)

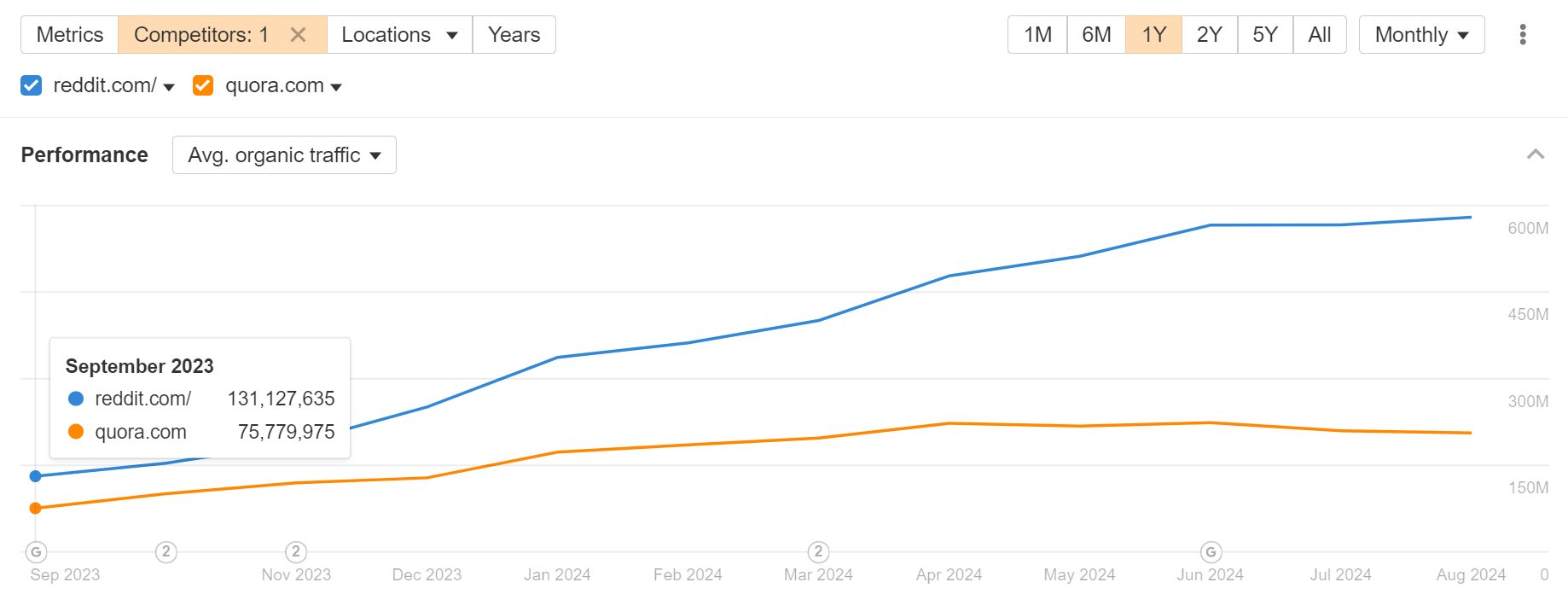

In a similar vein, UGC sites like Reddit and Quora have their own built-in quality control mechanisms (upvoting and downvoting), allowing Google to outsource the burden of moderation.

In response to the staggering quantity of content being created, Google seems to be adopting a “default blocklist” mindset, distrusting new information by default while giving preference to a handful of trusted brands and publishers.

Newer, smaller publishers are default blocklisted; companies like Forbes and TechRadar, Reddit and Quora, have been elevated to allowlist status.

Hitting the “boost” button for big brands may be a temporary measure from Google while it improves its algorithms. Even so, I think this is reflective of a broader shift.

As Bernard Huang from Clearscope put it in a webinar we ran together:

“I think with the era of the internet and now infinite content, we’re moving towards a society where a lot of people are default blocklisting everything and I’ll choose to allowlist, the Superpath community or Ryan Law on Twitter… As a way to continue to get content that they deem to be high-signal or trustworthy, they’re turning towards communities and influencers.”

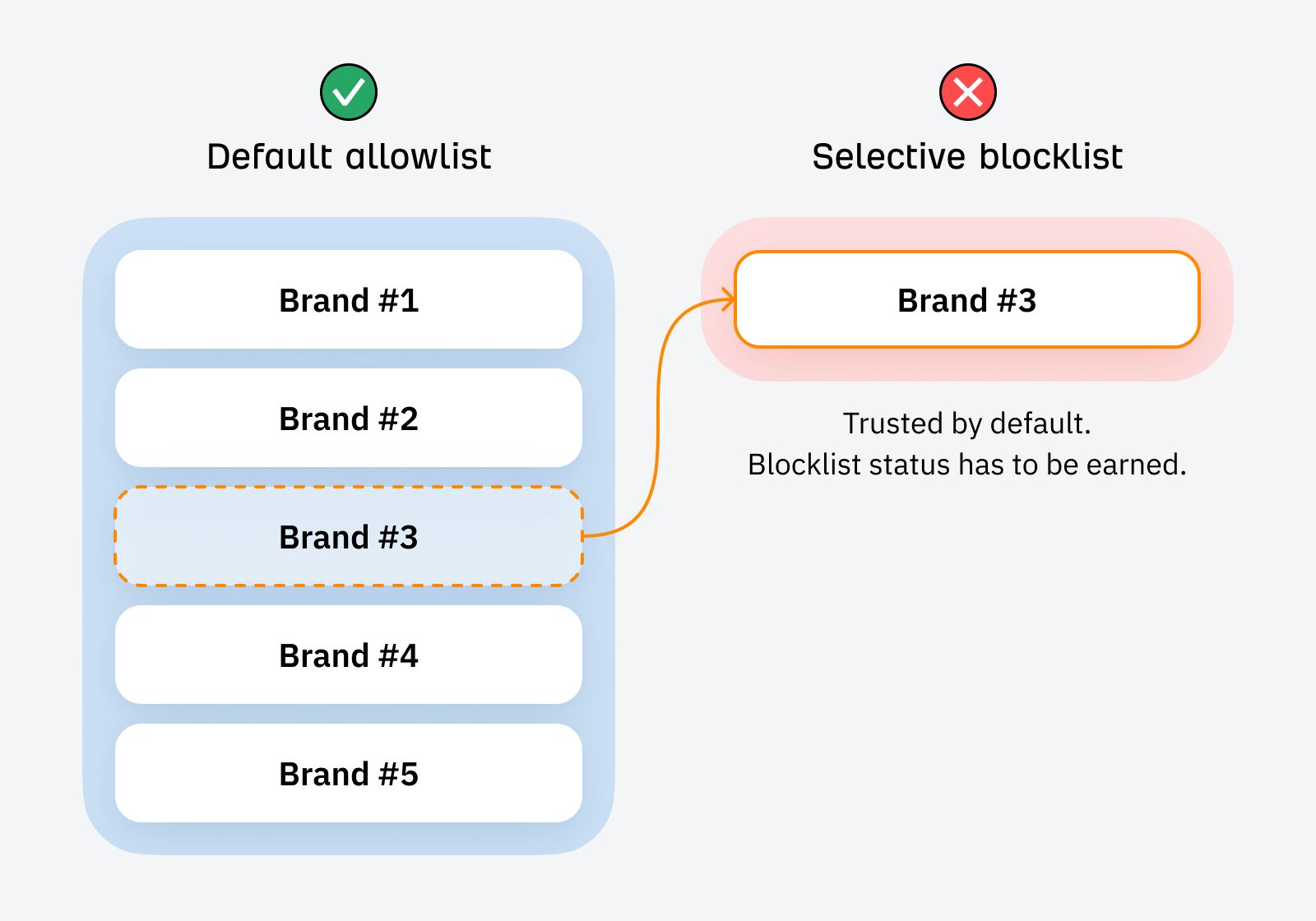

In the pre-AI era, brands were trusted by default. They had to actively violate trust to become blocklisted (by publishing something untrustworthy, or making an obvious factual error).

But today, with most brands racing to pump out AI slop, the safest stance is simply to assume that every new brand encountered is guilty of the same sin until proven otherwise.

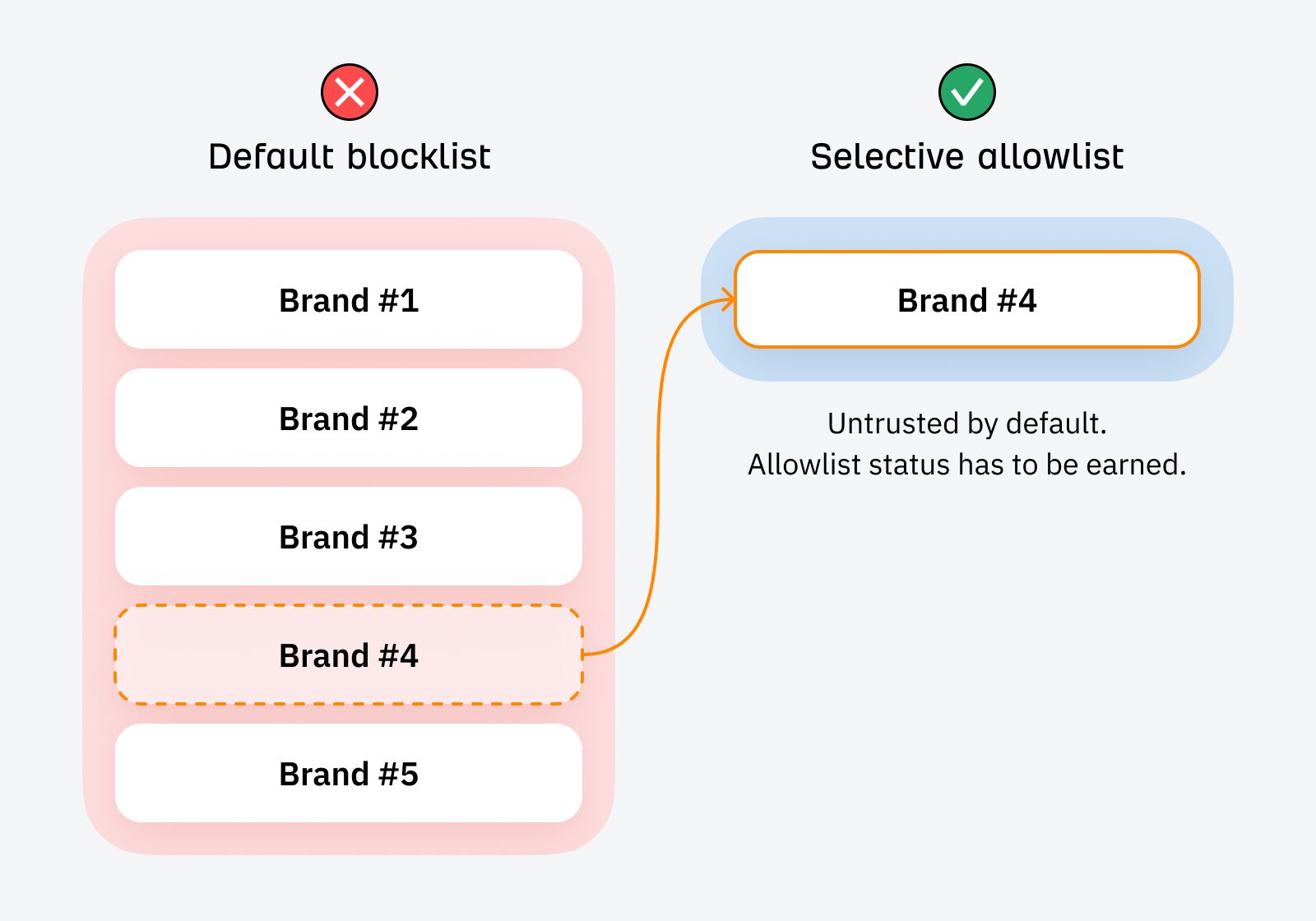

In the era of information abundance, new content and brands will find themselves on the default blocklist, and allowlist status needs to be earned.

In the AI era, Google is turning to gatekeepers: trusted entities that can vouch for the credibility and authenticity of content. Faced with the same problem, individual searchers will too.

Our job is to become one of these trusted gatekeepers of information.

Newer, smaller brands today are starting from a trust deficit.

The de facto marketing playbook of the pre-AI era (simply publishing helpful content) is no longer enough to climb out of the trust deficit and move from blocklist to allowlist. The game has changed. The marketing strategies that allowed Forbes et al. to build their brand moat won’t work for companies today.

New brands need to go beyond rote information sharing and pair it with a clear demonstration of credibility.

They need to signal very clearly that thought and effort have been expended in the creation of content; show that they care about the outcome of what they publish (and are willing to suffer any consequences resulting from it); and make their motivations for creating content crystal clear.

That means:

- Be selective with what you publish. Don’t be a jack-of-all-trades; focus on topics where you possess credibility. Measure yourself as much by what you don’t publish as by what you do.

- Create content that aligns with your business model. Coupon code and affiliate spam subdirectories are not helpful for earning the trust of skeptical searchers (or Google).

- Avoid “content sites”. Many of the sites hit hardest by the HCU (Google’s helpful content update) were “content sites” that existed solely to monetize website traffic. Content will be more credible when it supports a real, tangible product.

- Make your motivations crystal clear. Make it obvious who you are, why (and how) you’ve created your content, and how you benefit.

- Add something unique and proprietary to everything you publish. This doesn’t have to be complicated: run simple experiments, invest greater effort than your competitors, and anchor everything in first-hand experience (I’ve written about this in detail here).

- Get real people to author your content. Encourage them to show off their credentials through photos, anecdotes, and author bios.

- Build personal brands. Turn your faceless company brand into something associated with real, breathing people.

- Use Google’s gatekeepers to your advantage. If Google is telling you that it really trusts Reddit content, well… maybe you should try distributing your content and ideas through Reddit?

- Become a gatekeeper for your audience. What would it mean to become a trusted gatekeeper for your audience? Limit what you share, carefully curate third-party content, and be willing to vouch for anything you publish.

Final thoughts

The blocklist is not a literal blocklist, but it is a useful mental model for understanding the impact of AI generation on search.

The internet has been poisoned by AI content; everything created henceforth lives under the shadow of suspicion. So accept that you are starting from a place of suspicion. How can you earn the trust of Google and searchers alike?