At Microsoft Ignite, we’re introducing significant updates across our entire cloud and AI infrastructure.

The foundation of Microsoft’s AI advancements is its infrastructure. It was custom designed and built from the ground up to power some of the world’s most widely used and demanding services. While generative AI is now transforming how businesses operate, we’ve been on this journey for over a decade: developing our infrastructure, designing our systems, and reimagining our approach from software to silicon. The end-to-end optimization that forms our systems approach gives organizations the agility to deploy AI capable of transforming their operations and industries.

From agile startups to multinational corporations, Microsoft’s infrastructure offers more choice in performance, power, and cost efficiency so that our customers can continue to innovate. At Microsoft Ignite, we’re introducing significant updates across our entire cloud and AI infrastructure, from advancements in chips and liquid cooling to new data integrations and more flexible cloud deployments.

Unveiling the latest silicon updates across Azure infrastructure

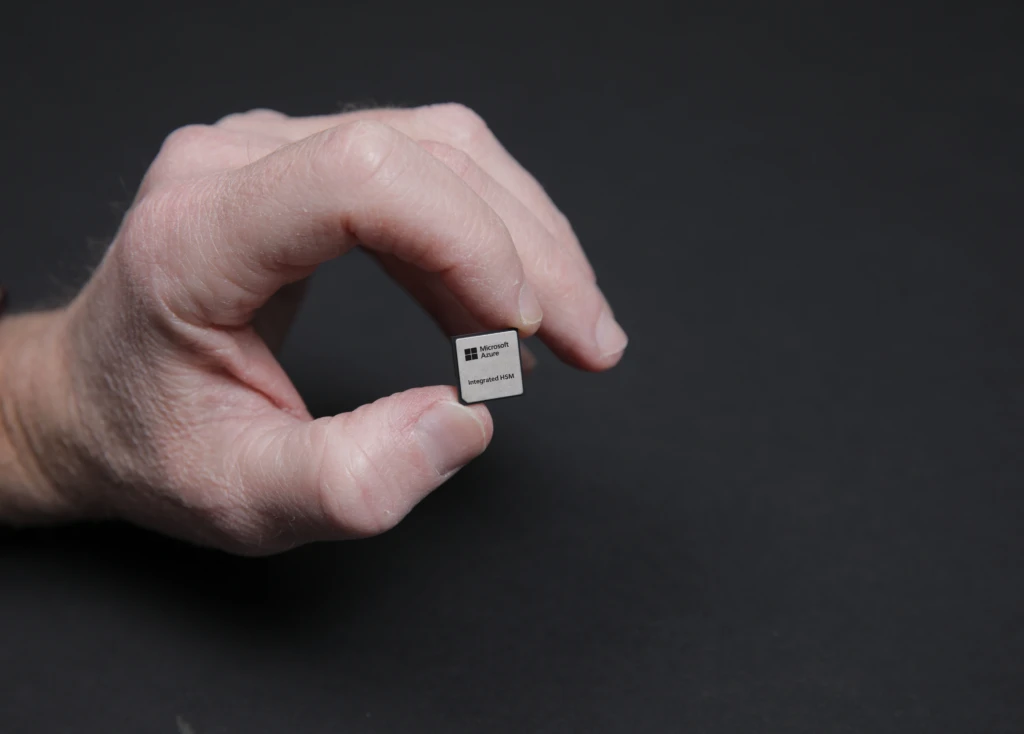

As part of our systems approach to optimizing every layer of our infrastructure, we continue to combine the best of industry with innovation from our own unique perspectives. In addition to Azure Maia AI accelerators and Azure Cobalt central processing units (CPUs), Microsoft is expanding its custom silicon portfolio to make our infrastructure more efficient and more secure. Azure Integrated HSM is our newest in-house security chip: a dedicated hardware security module (HSM) that hardens key management so that encryption and signing keys remain within the bounds of the HSM, without compromising performance or increasing latency. Azure Integrated HSM will be installed in every new server in Microsoft’s datacenters starting next year to increase protection across Azure’s hardware fleet for both confidential and general-purpose workloads.

We’re also introducing Azure Boost DPU, our first in-house data processing unit (DPU), designed to run data-centric workloads with high efficiency and low power and capable of absorbing multiple components of a traditional server into a single piece of dedicated silicon. We expect future DPU-equipped servers to run cloud storage workloads using three times less power while delivering four times the performance of current servers.
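The two expectations above can be combined into a single efficiency figure. The sketch below is a back-of-the-envelope calculation, not a published Microsoft number: it normalizes a current server to 1.0 for both performance and power, and it assumes that "three times less power" means one-third of the baseline power draw.

```python
# Back-of-the-envelope estimate; not an official Microsoft figure.
# Hypothetical baseline: a current storage server normalized to 1.0.
BASELINE_PERF = 1.0
BASELINE_POWER = 1.0

# Stated expectations for future DPU-equipped servers on storage workloads.
# Assumption: "three times less power" is read as one-third of baseline power.
dpu_perf = 4.0 * BASELINE_PERF
dpu_power = BASELINE_POWER / 3.0


def perf_per_watt(perf: float, power: float) -> float:
    """Work delivered per unit of power drawn."""
    return perf / power


# Ratio of the DPU server's efficiency to the baseline server's efficiency.
gain = perf_per_watt(dpu_perf, dpu_power) / perf_per_watt(BASELINE_PERF, BASELINE_POWER)
print(f"Implied performance-per-watt gain: {gain:.0f}x")
```

Under these assumptions the script prints an implied gain of 12x; real results will depend on the workload mix and on how the baseline server is defined.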

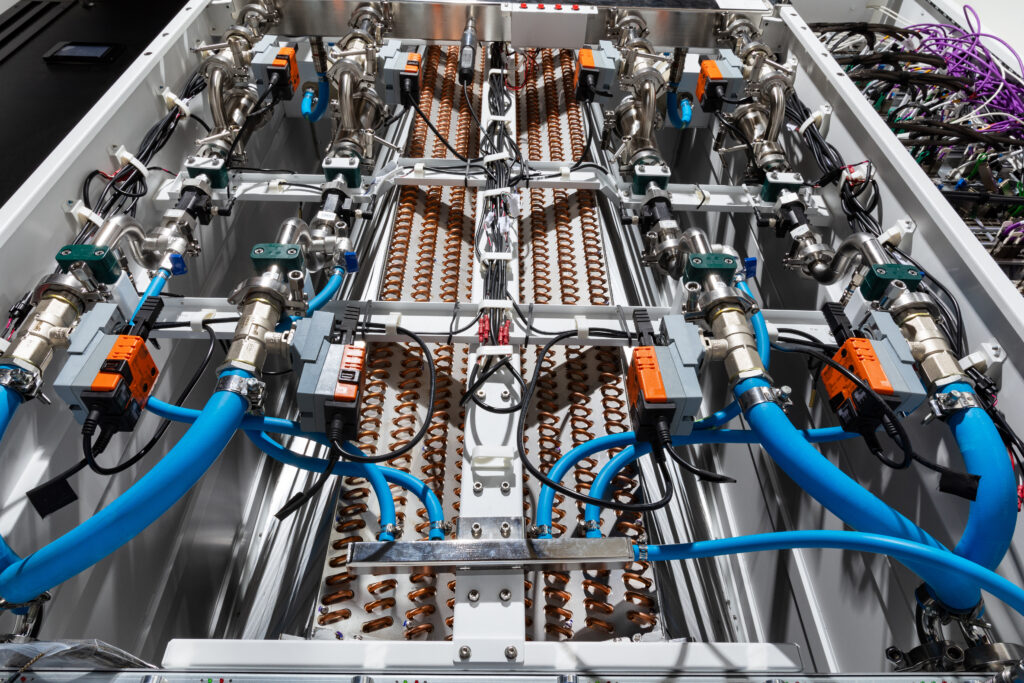

We also continue to advance our cooling technology for GPUs and AI accelerators with our next-generation liquid cooling “sidekick” rack (heat exchanger unit), which supports AI systems built on silicon from industry leaders as well as our own. The unit can be retrofitted into Azure datacenters to support cooling of large-scale AI systems, such as those built on NVIDIA GB200, in our AI infrastructure.

In addition to cooling, we’re optimizing how we deliver power so that we can meet the evolving demands of AI and hyperscale systems more efficiently. We’ve collaborated with Meta on a new disaggregated power rack design, aimed at enhancing flexibility and scalability as we bring AI infrastructure into our existing datacenter footprint. Each disaggregated power rack will feature 400-volt DC power, which enables up to 35% more AI accelerators in each server rack and allows dynamic power adjustments to meet the varying demands of AI workloads. We’re open sourcing these cooling and power rack specifications through the Open Compute Project so that the industry can benefit.

Azure’s AI infrastructure builds on this innovation at the hardware and silicon layer to power some of the most groundbreaking AI advancements in the world, from revolutionary frontier models to large-scale generative inferencing. In October, we announced the launch of the ND H200 v5 Virtual Machine (VM) series, which uses NVIDIA H200 GPUs with enhanced memory bandwidth. Our continuous software optimization efforts across these VMs mean that Azure delivers performance improvements generation over generation. From NVIDIA H100 to H200 GPUs, that rate of performance improvement was twice the industry’s, as demonstrated in industry benchmarking.

We’re also excited to announce that Microsoft is bringing the NVIDIA Blackwell platform to the cloud. We’re beginning to bring these systems online in preview, co-validating and co-optimizing with NVIDIA and other AI leaders. Azure ND GB200 v6 will be a new AI-optimized virtual machine series that combines the NVIDIA GB200 NVL72 rack-scale design with state-of-the-art Quantum InfiniBand networking to connect tens of thousands of Blackwell GPUs and deliver AI supercomputing performance at scale.

We’re also sharing today our latest advancement in CPU-based supercomputing, the Azure HBv5 virtual machine. Powered by custom AMD EPYC™ 9V64H processors available only on Azure, these VMs will be up to eight times faster than the latest bare-metal and cloud alternatives on a variety of HPC workloads, and up to 35 times faster than on-premises servers at the end of their lifecycle. These performance improvements are made possible by 7 TB/s of memory bandwidth from high bandwidth memory (HBM) and the most scalable AMD EPYC server platform to date. Customers can now sign up for the preview of HBv5 virtual machines, which will begin in 2025.

Accelerating AI innovation through cloud migration and modernization

To get the most from AI, organizations need to integrate the data residing in their critical business applications. Migrating and modernizing these applications to the cloud helps enable that integration and paves the way to faster innovation while delivering improved performance and scalability. Choosing Azure means selecting a platform that natively supports all the mission-critical enterprise applications and data you need to fully leverage advanced technologies like AI. This includes your workloads on SAP, VMware, and Oracle, as well as open-source software and Linux.

For example, thousands of customers run their SAP ERP applications on Azure, and we’re bringing unique innovation to these organizations, such as the integration between Microsoft Copilot and SAP’s AI assistant Joule. Companies like L’Oreal, Hilti, Unilever, and Zeiss have migrated their mission-critical SAP workloads to Azure so they can innovate faster. And since the launch of Azure VMware Solution, we’ve been working to support customers globally with geographic expansion. Azure VMware Solution is now available in 33 regions, with support for VMware VCF portable subscriptions.

We’re also continually improving Oracle Database@Azure to better support the mission-critical Oracle workloads of our enterprise customers. Customers like The Craneware Group and Vodafone have adopted Oracle Database@Azure to benefit from its high performance and low latency, which allows them to focus on streamlining their operations and gives them access to advanced security, data governance, and AI capabilities in the Microsoft Cloud. Today we’re announcing that Microsoft Purview supports Oracle Database@Azure, providing comprehensive data governance and compliance capabilities that organizations can use to manage, secure, and track data across Oracle workloads.

In addition, Oracle and Microsoft plan to offer Oracle Exadata Database Service on Exascale Infrastructure in Oracle Database@Azure for hyper-elastic scaling and pay-per-use economics. We’ve also expanded the availability of Oracle Database@Azure to a total of nine regions and enhanced Microsoft Fabric integration with Open Mirroring capabilities.

To make it easier to migrate and modernize your applications in the cloud, starting today you can assess your application’s readiness for Azure using Azure Migrate. The new application-aware method provides technical and business insights to help you migrate an entire application, with all its dependencies, as a single unit.

Optimizing your operations with an adaptive cloud for business growth

Azure’s multicloud and hybrid approach, or adaptive cloud, integrates separate teams, distributed locations, and diverse systems into a single model for operations, security, applications, and data. This allows organizations to use cloud-native and AI technologies across hybrid, multicloud, edge, and IoT environments. Azure Arc plays an important role in this approach by extending Azure services to any infrastructure and helping organizations manage their workloads and operate across different environments. Azure Arc now has over 39,000 customers across every industry, including La Liga, Coles, and The World Bank.

We’re excited to introduce Azure Local, a new, cloud-connected, hybrid infrastructure offering provisioned and managed in Azure. Azure Local brings Azure Stack capabilities together into one unified platform. Powered by Azure Arc, Azure Local can run containers, servers, and Azure Virtual Desktop on Microsoft-validated hardware from Dell, HPE, Lenovo, and more. This unlocks new possibilities for meeting custom latency, near real-time data processing, and compliance requirements. Azure Local comes with enhanced default security settings to protect your data, along with flexible configuration options such as GPU-enabled servers for AI inferencing.

We recently announced the general availability of Windows Server 2025, with new features that include easier upgrades, advanced security, and capabilities that enable AI and machine learning. Windows Server 2025 is also previewing a hotpatching subscription option, enabled by Azure Arc, that will allow organizations to install updates with fewer restarts, a major time saver.

We’re also announcing the preview of SQL Server 2025, an enterprise AI-ready database platform that leverages Azure Arc to deliver cloud agility anywhere. This new version continues SQL Server’s industry-leading security and performance and has AI built in, simplifying AI application development and retrieval-augmented generation (RAG) patterns with secure, performant, and easy-to-use vector support. With Azure Arc, SQL Server 2025 offers cloud capabilities that help customers better manage, secure, and govern their SQL estate at scale across on-premises and cloud.
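Vector support matters for RAG because the retrieval step is, at its core, a nearest-neighbor search over embeddings. The sketch below illustrates that step in plain Python using cosine similarity. It is a generic illustration, not SQL Server 2025’s syntax or API; the document names, texts, and three-dimensional embeddings are hypothetical toy values, whereas a real system would generate embeddings with a model and run the similarity search inside the database.

```python
import math
from typing import Dict, List, Tuple

# Hypothetical toy corpus: doc_id -> (text chunk, embedding).
# Real embeddings come from an embedding model and have hundreds of dimensions.
CORPUS: Dict[str, Tuple[str, List[float]]] = {
    "returns-policy": ("Items can be returned within 30 days.", [0.9, 0.1, 0.0]),
    "shipping-times": ("Orders usually ship in 2 business days.", [0.1, 0.9, 0.1]),
    "warranty-terms": ("Hardware carries a one-year warranty.", [0.7, 0.2, 0.3]),
}


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: List[float], k: int) -> List[Tuple[str, float]]:
    """Return the k most similar documents as (doc_id, score), best first."""
    scored = [(doc_id, cosine_similarity(query, emb)) for doc_id, (_, emb) in CORPUS.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


def build_prompt(question: str, query_embedding: List[float], k: int = 2) -> str:
    """Retrieval step of RAG: ground the question in the top-k text chunks."""
    hits = top_k(query_embedding, k)
    context = "\n".join(CORPUS[doc_id][0] for doc_id, _ in hits)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Hypothetical embedding of the question "Can I send back a faulty device?"
query_vec = [1.0, 0.0, 0.1]
context_hits = top_k(query_vec, k=2)
print(build_prompt("Can I send back a faulty device?", query_vec))
```

With these toy vectors, the returns and warranty chunks are retrieved and the shipping chunk is left out, so the model is prompted only with the most relevant context. Pushing this search into the database, as vector support in SQL Server 2025 is meant to do, avoids moving every embedding into application code.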

Transform with Azure infrastructure to achieve cloud and AI success

Successful transformation with AI starts with a strong, secure, and adaptive infrastructure strategy. As you evolve, you need a cloud platform that adapts and scales with your needs. Azure is that platform, providing the optimal environment for integrating your applications and data so that you can start innovating with AI. As you design, deploy, and manage your environment and workloads on Azure, you have access to best practices and industry-leading technical guidance to help you accelerate your AI adoption and achieve your business goals.

Jumpstart your AI journey at Microsoft Ignite

Key periods at Microsoft Ignite

Discover more announcements at Microsoft Ignite

Resources for AI transformation