Today, I’m delighted to share the launch of the Coalition for Secure AI (CoSAI). CoSAI is an alliance of industry leaders, researchers, and developers dedicated to enhancing the security of AI implementations. CoSAI operates under the auspices of OASIS Open, the international standards and open-source consortium.

CoSAI’s founding members include industry leaders such as OpenAI, Anthropic, Amazon, Cisco, Cohere, GenLab, Google, IBM, Intel, Microsoft, Nvidia, Wiz, Chainguard, and PayPal. Together, our goal is to create a future where technology is not only cutting-edge but also secure-by-default.

CoSAI’s Scope & Relationship to Other Initiatives

CoSAI complements existing AI initiatives by focusing on how to integrate and leverage AI securely across organizations of all sizes and throughout all phases of development and usage. CoSAI collaborates with NIST, the Open Source Security Foundation (OpenSSF), and other stakeholders through collaborative AI security research, best-practice sharing, and joint open-source initiatives.

CoSAI’s scope includes securely building, deploying, and operating AI systems to mitigate AI-specific security risks such as model manipulation, model theft, data poisoning, prompt injection, and confidential data extraction. We must equip practitioners with integrated security solutions, enabling them to leverage state-of-the-art AI controls without needing to become experts in every facet of AI security.

Where possible, CoSAI will collaborate with other organizations driving technical advancements in responsible and secure AI, including the Frontier Model Forum, Partnership on AI, OpenSSF, and ML Commons. Members, such as Google with its Secure AI Framework (SAIF), may contribute existing work in the form of thought leadership, research, best practices, projects, or open-source tools to enhance the partner ecosystem.

Collective Efforts in Secure AI

Securing AI remains a fragmented effort, with developers, implementors, and users often facing inconsistent and siloed guidelines. Assessing and mitigating AI-specific risks without clear best practices and standardized approaches is a challenge, even for the most experienced organizations.

Security requires collective action, and the best way to secure AI is with AI. To participate safely in the digital ecosystem (and secure it for everyone), individuals, developers, and companies alike must adopt common security standards and best practices. AI is no exception.

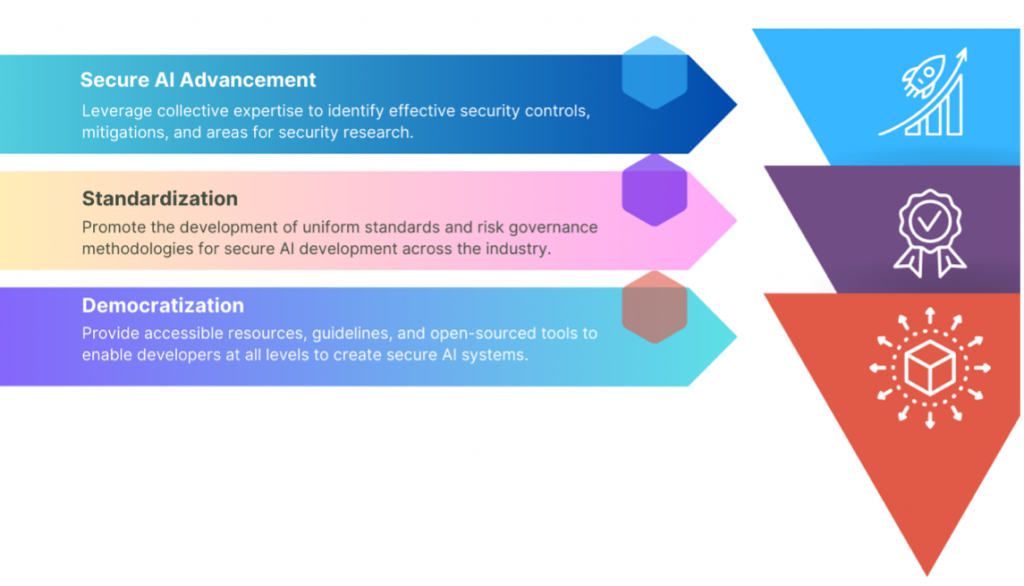

Goals of CoSAI

The following are the goals of CoSAI.

Key Workstreams

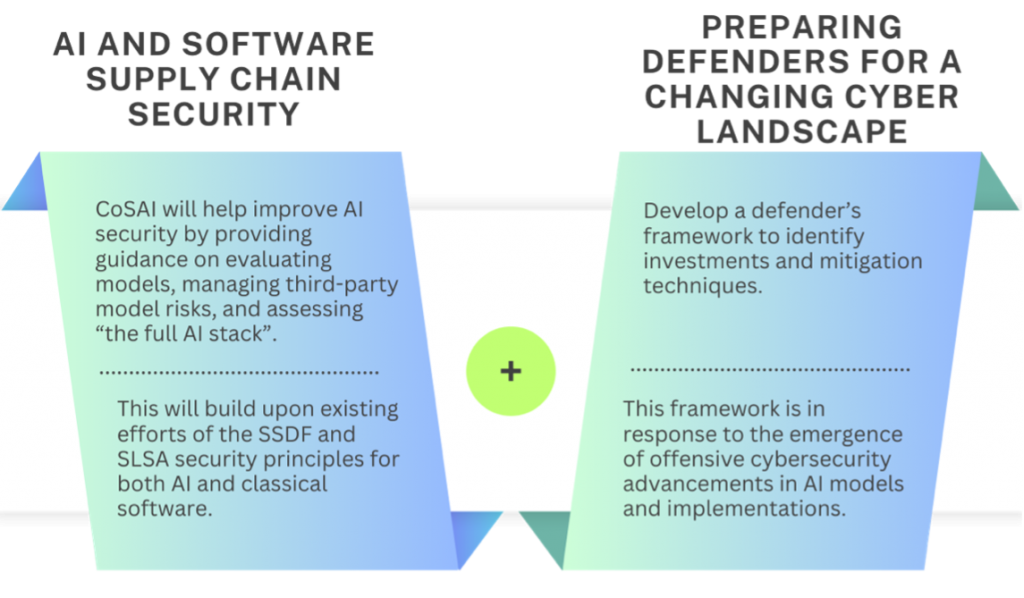

CoSAI will collaborate with industry and academia to address key AI security issues. Our initial workstreams include AI and software supply chain security and preparing defenders for a changing cyber landscape.

CoSAI’s diverse stakeholders from leading technology companies invest in AI security research, share security expertise and best practices, and build technical open-source solutions and methodologies for secure AI development and deployment.

CoSAI is moving forward to create a more secure AI ecosystem, building trust in AI technologies and ensuring their secure integration across all organizations. The security challenges arising from AI are complex and dynamic. We’re confident that this coalition of technology leaders is well-positioned to make a significant impact in enhancing the security of AI implementations.

