Understanding how to use the robots.txt file is crucial for any website’s search engine optimization strategy. Mistakes in this file can affect how your website is crawled and how your pages appear in search. Getting it right, on the other hand, can improve crawling efficiency and mitigate crawling issues.

Google recently reminded website owners about the importance of using robots.txt to block unnecessary URLs.

Those include add-to-cart, login, or checkout pages. But the question is: how do you use it properly?

In this article, we will guide you through every nuance of how to do just that.

What Is Robots.txt?

The robots.txt is a simple text file that sits in the root directory of your site and tells crawlers what should be crawled.

The table below provides a quick reference to the key robots.txt directives.

| Directive | Description |
| --- | --- |
| User-agent | Specifies which crawler the rules apply to. See user agent tokens. Using * targets all crawlers. |
| Disallow | Prevents specified URLs from being crawled. |
| Allow | Allows specific URLs to be crawled, even if a parent directory is disallowed. |
| Sitemap | Indicates the location of your XML sitemap, helping search engines discover it. |

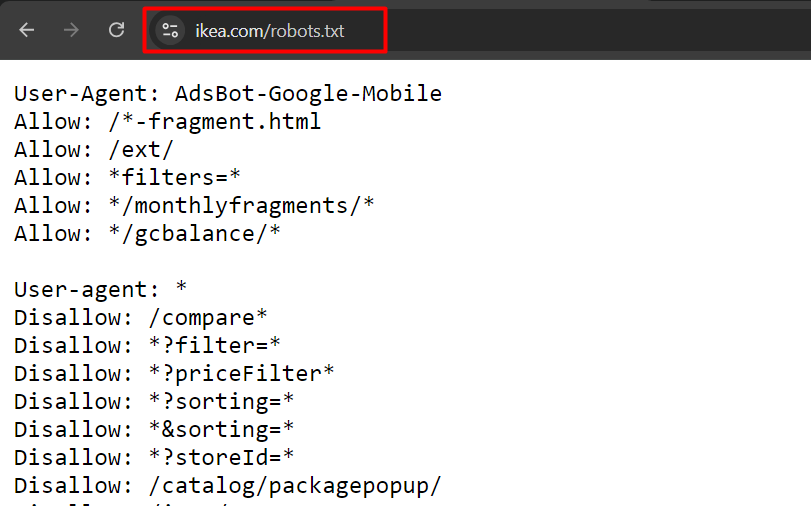

This is an example of robots.txt from ikea.com with multiple rules.

Example of robots.txt from ikea.com

Note that robots.txt does not support full regular expressions and only has two wildcards:

- Asterisk (*), which matches a sequence of zero or more characters.

- Dollar sign ($), which matches the end of a URL.

Also, note that its rules are case-sensitive, e.g., “filter=” is not equal to “Filter=”.
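The wildcard and case-sensitivity behavior described above can be sketched in a few lines of Python. This is only an illustration, not Google's parser: `pattern_to_regex` and `matches` are hypothetical helper names, and the sample paths are made up.

```python
import re


def pattern_to_regex(pattern):
    """Translate a robots.txt path pattern into a compiled regex (illustrative only)."""
    anchored = pattern.endswith("$")  # a trailing "$" pins the match to the end of the URL
    if anchored:
        pattern = pattern[:-1]
    # Escape literal text; each "*" becomes ".*" (zero or more characters).
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def matches(pattern, path):
    """Return True if the pattern matches starting at the beginning of the path."""
    return pattern_to_regex(pattern).match(path) is not None


print(matches("/*.pdf$", "/guides/manual.pdf"))      # True
print(matches("/*.pdf$", "/guides/manual.pdf?v=2"))  # False: URL does not end in .pdf
print(matches("*filter=*", "/shoes?filter=red"))     # True
print(matches("*filter=*", "/shoes?Filter=red"))     # False: matching is case-sensitive
```

Because no flag such as `re.IGNORECASE` is used, the sketch treats “filter=” and “Filter=” as different strings, which mirrors the case-sensitivity note above.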

Order Of Precedence In Robots.txt

When setting up a robots.txt file, it is important to know the order in which search engines decide which rules to apply when rules conflict.

They follow these two key rules:

1. Most Specific Rule

The rule that matches more characters in the URL will be applied. For example:

User-agent: *
Disallow: /downloads/
Allow: /downloads/free/

In this case, the “Allow: /downloads/free/” rule is more specific than “Disallow: /downloads/” because it targets a subdirectory.

Google will allow crawling of the subfolder “/downloads/free/” but block everything else under “/downloads/”.

2. Least Restrictive Rule

When multiple rules are equally specific, for example:

User-agent: *
Disallow: /downloads/
Allow: /downloads/

Google will choose the least restrictive one. This means Google will allow access to /downloads/.
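The two precedence rules can be expressed as a small Python sketch. It assumes that "most specific" means the longest matching rule path and that a tie goes to Allow; `matches` and `is_allowed` are hypothetical helpers written for this illustration, not part of any official tool.

```python
import re


def matches(pattern, path):
    """Robots.txt-style match: "*" is a wildcard, a trailing "$" anchors the end."""
    anchored = pattern.endswith("$")
    core = pattern[:-1] if anchored else pattern
    regex = ".*".join(re.escape(part) for part in core.split("*"))
    return re.match(regex + ("$" if anchored else ""), path) is not None


def is_allowed(rules, path):
    """Pick the longest matching rule; on a tie, prefer Allow (least restrictive)."""
    best = None  # (pattern length, is_allow)
    for directive, pattern in rules:
        if matches(pattern, path):
            candidate = (len(pattern), directive == "allow")
            if best is None or candidate > best:
                best = candidate
    # No matching rule means the path may be crawled.
    return True if best is None else best[1]


specific = [("disallow", "/downloads/"), ("allow", "/downloads/free/")]
print(is_allowed(specific, "/downloads/free/ebook.pdf"))  # True
print(is_allowed(specific, "/downloads/paid/ebook.pdf"))  # False

tie = [("disallow", "/downloads/"), ("allow", "/downloads/")]
print(is_allowed(tie, "/downloads/report.pdf"))           # True
```

Comparing `(length, is_allow)` tuples keeps both rules in one place: length decides first, and `True` outranks `False` only when the lengths are equal.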

Why Is Robots.txt Important In SEO?

Blocking unimportant pages with robots.txt helps Googlebot focus its crawl budget on valuable parts of the website and on crawling new pages. It also helps search engines save computing power, contributing to better sustainability.

Imagine you have an online store with hundreds of thousands of pages. Some sections of a website, like filtered pages, may have an infinite number of versions.

Those pages don’t have unique value, essentially contain duplicate content, and may create infinite crawl space, thus wasting your server’s and Googlebot’s resources.

That is where robots.txt comes in, preventing search engine bots from crawling those pages.

If you don’t do that, Google may try to crawl an infinite number of URLs with different (even non-existent) search parameter values, causing spikes and a waste of crawl budget.

When To Use Robots.txt

As a general rule, you should always ask why certain pages exist, and whether they have anything worth crawling and indexing by search engines.

Following this principle, we should always block:

- URLs that contain query parameters such as:

  - Internal search.

  - Faceted navigation URLs created by filtering or sorting options, if they are not part of the URL structure and SEO strategy.

  - Action URLs like add to wishlist or add to cart.

- Private parts of the website, like login pages.

- JavaScript files not relevant to website content or rendering, such as tracking scripts.

- Scrapers and AI chatbots, to prevent them from using your content for training purposes.

Let’s dive into examples of how you can use robots.txt for each case.

1. Block Internal Search Pages

The most common and absolutely necessary step is to block internal search URLs from being crawled by Google and other search engines, as almost every website has internal search functionality.

On WordPress websites, it is usually an “s” parameter, and the URL looks like this:

https://www.example.com/?s=google

Gary Illyes from Google has repeatedly warned to block “action” URLs, as they can cause Googlebot to crawl them indefinitely, including non-existent URLs with different combinations.

Here is the rule you can use in your robots.txt to block such URLs from being crawled:

User-agent: *
Disallow: *s=*

- The User-agent: * line specifies that the rule applies to all web crawlers, including Googlebot, Bingbot, etc.

- The Disallow: *s=* line tells all crawlers not to crawl any URLs that contain the query parameter “s=”. The wildcard “*” means it can match any sequence of characters before or after “s=”. However, it will not match URLs with an uppercase “S” like “/?S=” since it is case-sensitive.
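One thing worth checking before deploying a rule like this is how broadly it matches. The Python sketch below uses a hypothetical `matches` helper (an approximation of robots.txt wildcard matching, not Google's parser) and made-up URLs to show that `*s=*` also catches any parameter whose name merely ends in “s”, and how the narrower patterns `*?s=*` and `*&s=*` avoid that.

```python
import re


def matches(pattern, path):
    """Robots.txt-style match: "*" is a wildcard, a trailing "$" anchors the end."""
    anchored = pattern.endswith("$")
    core = pattern[:-1] if anchored else pattern
    regex = ".*".join(re.escape(part) for part in core.split("*"))
    return re.match(regex + ("$" if anchored else ""), path) is not None


broad = "*s=*"
print(matches(broad, "/?s=google"))  # True: the intended target
print(matches(broad, "/?S=google"))  # False: rules are case-sensitive
print(matches(broad, "/?pages=2"))   # True: "pages=" also contains "s="

# Narrower alternative: require "s" to be a whole parameter name.
narrow = ["*?s=*", "*&s=*"]
print(any(matches(p, "/?pages=2") for p in narrow))           # False
print(any(matches(p, "/?s=google") for p in narrow))          # True
print(any(matches(p, "/?lang=en&s=google") for p in narrow))  # True
```

Whether the broad or the narrow form is appropriate depends on which parameters your site actually uses, so it helps to run real URLs from your logs through a check like this.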

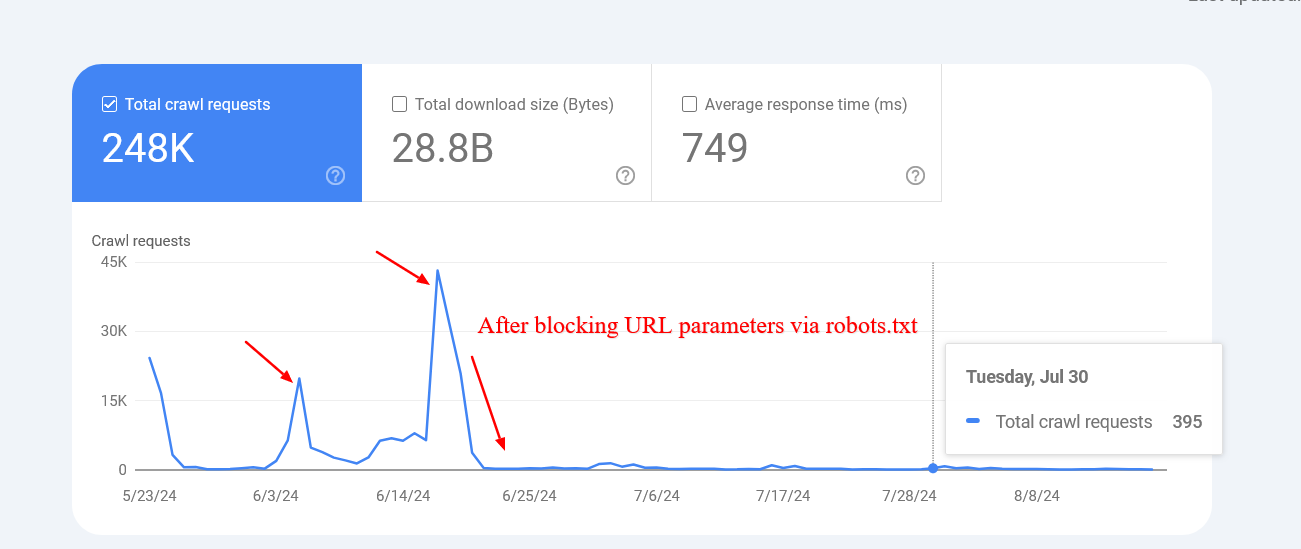

Here is an example of a website that managed to drastically reduce the crawling of non-existent internal search URLs after blocking them via robots.txt.

Screenshot from crawl stats report

Note that Google may index those blocked pages, but you don’t need to worry about them as they will be dropped over time.

2. Block Faceted Navigation URLs

Faceted navigation is an integral part of every ecommerce website. There can be cases where faceted navigation is part of an SEO strategy and aimed at ranking for general product searches.

For example, Zalando uses faceted navigation URLs for color options to rank for general product keywords like “gray t-shirt”.

However, in most cases, filter parameters are used merely for filtering products, creating dozens of pages with duplicate content.

Technically, those parameters are no different from internal search parameters, with one exception: there may be multiple parameters. You need to make sure you disallow all of them.

For example, if you have filters with the parameters “sortby”, “color”, and “price”, you may use this set of rules:

User-agent: *
Disallow: *sortby=*
Disallow: *color=*
Disallow: *price=*

Depending on your specific case, there may be more parameters, and you may need to add all of them.
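When the list of filter parameters is long, generating the rules can be less error-prone than typing them by hand. The following is a minimal Python sketch; `build_disallow_rules` is a hypothetical helper, and the parameter names are placeholders to replace with your own.

```python
def build_disallow_rules(params, user_agent="*"):
    """Return robots.txt lines that block any URL containing "<param>=" for each name."""
    lines = [f"User-agent: {user_agent}"]
    lines += [f"Disallow: *{name}=*" for name in params]
    return "\n".join(lines)


# Placeholder parameter names; substitute the ones your site uses.
print(build_disallow_rules(["sortby", "color", "price"]))
```

The output is plain text that can be pasted into robots.txt and then validated before it is uploaded.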

What About UTM Parameters?

UTM parameters are used for tracking purposes.

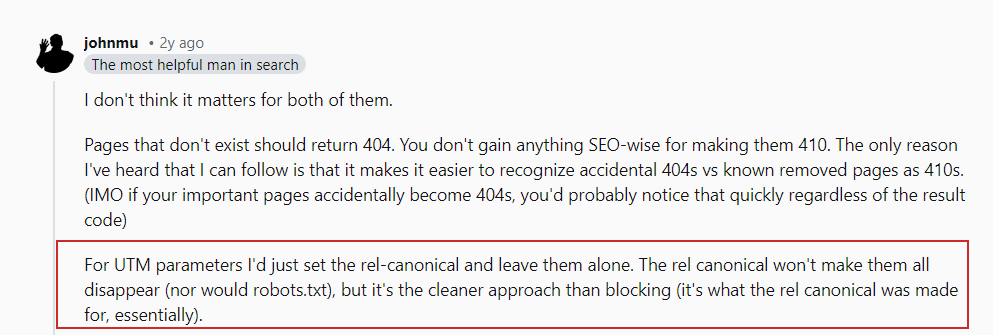

As John Mueller stated in his Reddit post, you don’t need to worry about URL parameters that link to your pages externally.

John Mueller on UTM parameters

Just make sure to block any random parameters you use internally, and avoid linking internally to those pages, e.g., linking from your article pages to a search results page such as “https://www.example.com/?s=google”.

3. Block PDF URLs

Let’s say you have a lot of PDF documents, such as product guides, brochures, or downloadable papers, and you don’t want them crawled.

Here is a simple robots.txt rule that will block search engine bots from accessing those documents:

User-agent: *
Disallow: /*.pdf$

The “Disallow: /*.pdf$” line tells crawlers not to crawl any URLs that end with .pdf.

By using /*, the rule matches any path on the website. As a result, any URL ending with .pdf will be blocked from crawling.

If you have a WordPress website and want to disallow PDFs from the uploads directory where you upload them via the CMS, you can use the following rule:

User-agent: *
Disallow: /wp-content/uploads/*.pdf$
Allow: /wp-content/uploads/2024/09/allowed-document.pdf$

You can see that we have conflicting rules here.

In case of conflicting rules, the more specific one takes priority, which means the last line ensures that only the specific file located at “wp-content/uploads/2024/09/allowed-document.pdf” is allowed to be crawled.

4. Block A Directory

Let’s say you have an API endpoint where you submit data from a form. It is likely your form has an action attribute like action="/form/submissions/".

The issue is that Google will try to crawl that URL, /form/submissions/, which you likely don’t want. You can block these URLs from being crawled with this rule:

User-agent: *
Disallow: /form/

By specifying a directory in the Disallow rule, you are telling crawlers to avoid crawling all pages under that directory, and you don’t need to add the (*) wildcard, as in “/form/*”.

Note that you must always specify relative paths and never absolute URLs, like “https://www.example.com/form/”, for Disallow and Allow directives.

Be careful to avoid malformed rules. For example, using /form without a trailing slash will also match a page /form-design-examples/, which may be a page on your blog that you want to index.
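The trailing-slash pitfall comes down to prefix matching: a Disallow path without wildcards blocks every URL path that starts with it. A minimal Python sketch, using a hypothetical `blocked_by` helper, makes the difference visible:

```python
def blocked_by(rule_path, url_path):
    """A wildcard-free Disallow path blocks every URL path that starts with it."""
    return url_path.startswith(rule_path)


print(blocked_by("/form/", "/form/submissions/"))      # True: inside the directory
print(blocked_by("/form/", "/form-design-examples/"))  # False: different path
print(blocked_by("/form", "/form-design-examples/"))   # True: missing slash over-matches
```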


5. Block User Account URLs

If you have an ecommerce website, you likely have directories that start with “/myaccount/”, such as “/myaccount/orders/” or “/myaccount/profile/”.

With the top page “/myaccount/” being a sign-in page that you want to be indexed and found by users in search, you may want to disallow the subpages from being crawled by Googlebot.

You can use the Disallow rule in combination with the Allow rule to block everything under the “/myaccount/” directory (except the /myaccount/ page).

User-agent: *
Disallow: /myaccount/
Allow: /myaccount/$

Again, since Google uses the most specific rule, it will disallow everything under the /myaccount/ directory but allow the /myaccount/ page itself to be crawled.

Here is another use case for combining the Disallow and Allow rules: your search lives under the /search/ directory, and you want that page to be found and indexed while blocking the actual search URLs:

User-agent: *
Disallow: /search/
Allow: /search/$

6. Block Non-Render Related JavaScript Files

Every website uses JavaScript, and many of these scripts are not related to the rendering of content, such as tracking scripts or those used for loading AdSense.

Googlebot can crawl and render a website’s content without these scripts. Therefore, blocking them is safe and recommended, as it saves the requests and resources needed to fetch and parse them.

Below is a sample rule that disallows a sample JavaScript file containing tracking pixels.

User-agent: *
Disallow: /assets/js/pixels.js

7. Block AI Chatbots And Scrapers

Many publishers are concerned that their content is being unfairly used to train AI models without their consent, and they wish to prevent this.

#ai chatbots
User-agent: GPTBot
User-agent: ChatGPT-User
User-agent: Claude-Web
User-agent: ClaudeBot
User-agent: anthropic-ai
User-agent: cohere-ai
User-agent: Bytespider
User-agent: Google-Extended
User-agent: PerplexityBot
User-agent: Applebot-Extended
User-agent: Diffbot
Disallow: /

#scrapers
User-agent: Scrapy
User-agent: magpie-crawler
User-agent: CCBot
User-agent: omgili
User-agent: omgilibot
User-agent: Node/simplecrawler
Disallow: /

Here, each user agent is listed individually, and the rule Disallow: / tells those bots not to crawl any part of the site.

Besides preventing AI training on your content, this can help reduce the load on your server by minimizing unnecessary crawling.

For ideas on which bots to block, you may want to check your server log files to see which crawlers are exhausting your servers. And remember, robots.txt does not prevent unauthorized access; it only asks compliant bots to stay away.
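A quick way to sanity-check grouped rules like these is Python's standard-library `urllib.robotparser`. The sketch below parses a shortened version of the file (three bots chosen from the list above) and asks whether each agent may fetch a made-up URL. Note that this module is a simple parser that does not implement Google's wildcard or longest-match behavior, so it is only suitable for plain rules such as `Disallow: /`.

```python
from urllib.robotparser import RobotFileParser

# Shortened sample; the URL checked below is a placeholder.
ROBOTS_TXT = """\
#ai chatbots
User-agent: GPTBot
User-agent: ClaudeBot
Disallow: /

#scrapers
User-agent: CCBot
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for bot in ("GPTBot", "ClaudeBot", "CCBot", "Googlebot"):
    allowed = parser.can_fetch(bot, "https://www.example.com/article/")
    print(f"{bot}: {'allowed' if allowed else 'blocked'}")
```

In this sample, the three listed bots are reported as blocked, while Googlebot, which has no matching group, is reported as allowed.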

8. Specify Sitemap URLs

Including your sitemap URL in the robots.txt file helps search engines easily discover all the important pages on your website. This is done by adding a line that points to your sitemap location, and you can specify multiple sitemaps, each on its own line.

Sitemap: https://www.example.com/sitemap/articles.xml
Sitemap: https://www.example.com/sitemap/news.xml
Sitemap: https://www.example.com/sitemap/video.xml

Unlike Allow or Disallow rules, which accept only a relative path, the Sitemap directive requires a full, absolute URL to indicate the location of the sitemap.
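To confirm that Sitemap lines are readable and absolute, one option is Python's standard-library `urllib.robotparser`, whose `site_maps()` method (available in Python 3.8 and later) returns the declared sitemap URLs. The file contents and the `is_absolute` helper below are illustrative assumptions.

```python
from urllib.parse import urlparse
from urllib.robotparser import RobotFileParser

# Sample file contents; the URLs are placeholders.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/

Sitemap: https://www.example.com/sitemap/articles.xml
Sitemap: https://www.example.com/sitemap/video.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())
sitemaps = parser.site_maps() or []  # site_maps() returns None when no Sitemap line exists


def is_absolute(url):
    """A sitemap URL should carry both a scheme and a host."""
    parts = urlparse(url)
    return parts.scheme in ("http", "https") and bool(parts.netloc)


for url in sitemaps:
    print(url, is_absolute(url))
```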

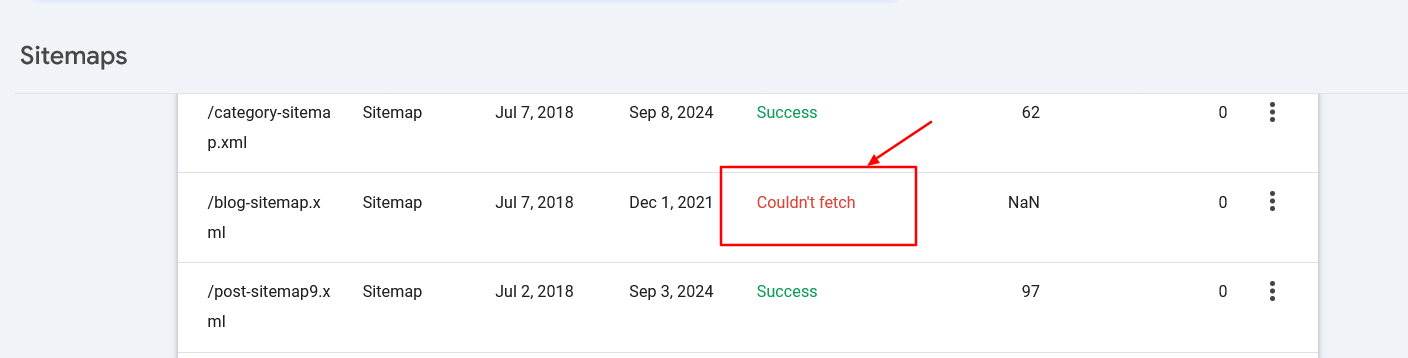

Ensure the sitemap URLs are accessible to search engines and have proper syntax to avoid errors.

Sitemap fetch error in search console

9. When To Use Crawl-Delay

The crawl-delay directive in robots.txt specifies the number of seconds a bot should wait before crawling the next page. While Googlebot does not recognize the crawl-delay directive, other bots may respect it.

It helps prevent server overload by controlling how frequently bots crawl your site.

For example, if you want ClaudeBot to crawl your content for AI training but want to avoid server overload, you can set a crawl delay to manage the interval between requests.

User-agent: ClaudeBot
Crawl-delay: 60

This instructs the ClaudeBot user agent to wait 60 seconds between requests when crawling the website.
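From the crawler's side, honoring this directive means reading the value and pausing between requests. As a rough illustration of how a compliant bot might read it, Python's standard-library `urllib.robotparser` exposes `crawl_delay()`; whether a given real-world bot does anything similar is up to that bot.

```python
from urllib.robotparser import RobotFileParser

parser = RobotFileParser()
parser.parse(["User-agent: ClaudeBot", "Crawl-delay: 60"])

# The delay applies only to the group that names the agent.
print(parser.crawl_delay("ClaudeBot"))  # 60
print(parser.crawl_delay("Googlebot"))  # None: no group matches and no "*" fallback
```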

Of course, there may be AI bots that don’t respect crawl-delay directives. In that case, you may need to use a web application firewall to rate limit them.

Troubleshooting Robots.txt

Once you have composed your robots.txt, you can use these tools to check whether the syntax is correct and whether you accidentally blocked an important URL.

1. Google Search Console Robots.txt Validator

Once you have updated your robots.txt, you must check whether it contains any errors or accidentally blocks URLs you want to be crawled, such as resources, images, or website sections.

Navigate to Settings > robots.txt, and you will find the built-in robots.txt validator, where you can fetch and validate your robots.txt.

2. Google Robots.txt Parser

This is Google’s official robots.txt parser, which is used in Search Console.

It requires advanced skills to install and run on your local computer. But it is highly recommended to take the time and do it as instructed on that page, because you can validate your changes to the robots.txt file against the official Google parser before uploading them to your server.

Centralized Robots.txt Management

Each domain and subdomain must have its own robots.txt, as Googlebot does not apply a root domain’s robots.txt to a subdomain.

This creates challenges when you have a website with a dozen subdomains, as it means you have to maintain a bunch of robots.txt files separately.

However, it is possible to host a robots.txt file on a subdomain, such as https://cdn.example.com/robots.txt, and set up a redirect from https://www.example.com/robots.txt to it.

You can also do the reverse: host it only under the root domain and redirect from subdomains to the root.

Search engines will treat the redirected file as if it were located on the root domain. This approach allows centralized management of robots.txt rules for both your main domain and subdomains.

It makes updates and maintenance more efficient. Otherwise, you would need to use a separate robots.txt file for each subdomain.

Conclusion

A properly optimized robots.txt file is crucial for managing a website’s crawl budget. It ensures that search engines like Googlebot spend their time on valuable pages rather than wasting resources on unnecessary ones.

In addition, blocking AI bots and scrapers using robots.txt can significantly reduce server load and save computing resources.

Make sure you always validate your changes to avoid unexpected crawlability issues.

However, remember that while blocking unimportant resources via robots.txt may help increase crawl efficiency, the main factors affecting crawl budget are high-quality content and page loading speed.

Happy crawling!


Featured Image: BestForBest/Shutterstock